Everything You Need to Manage AI Traffic

A production-grade, Rust-powered AISIX gateway designed for performance, governance, and observability.

High Performance

Blazing Fast, Built with Rust

Native Rust data plane delivers sub-millisecond proxy overhead with minimal memory footprint. Handle millions of requests per second without breaking a sweat.

Unified Governance

One API, All Your LLMs

Manage all your LLM providers through a single, OpenAI-compatible API. Centralized configuration, authentication, and policy enforcement across your entire AI stack.
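As a rough illustration of what "OpenAI-compatible" means for a client, the sketch below builds a chat completion request in the OpenAI wire format and points it at a gateway instead of a provider. The base URL, API key, and model name are placeholders chosen for this example, not documented AISIX defaults.

```python
import json
import urllib.request

# Hypothetical values for illustration only; substitute your own gateway
# address and the virtual key issued by your gateway.
GATEWAY_BASE_URL = "http://localhost:8080/v1"
API_KEY = "sk-example"


def build_chat_request(model: str, prompt: str) -> urllib.request.Request:
    """Build an OpenAI-style chat completion request aimed at the gateway."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{GATEWAY_BASE_URL}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {API_KEY}",
        },
        method="POST",
    )


if __name__ == "__main__":
    req = build_chat_request("example-model", "Hello")
    print(req.get_method(), req.full_url)
    # Sending requires a running gateway:
    # with urllib.request.urlopen(req) as resp:
    #     print(json.load(resp))
```

Because only the base URL and key change, existing OpenAI client code can usually be redirected to a gateway of this kind without touching the request logic.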

Intelligent Load Balancing

Multi-LLM Load Balancing

Dynamically distribute traffic across multiple LLM providers based on latency, cost, and availability. Supported strategies include weighted round-robin, least-connections, and custom strategies.
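To make weighted round-robin concrete, here is a minimal sketch of the "smooth" variant of the algorithm, which interleaves picks instead of sending bursts to the heaviest upstream. It is a generic illustration, not AISIX's implementation; the class name and provider names are invented for this example.

```python
from dataclasses import dataclass


@dataclass
class Upstream:
    name: str
    weight: int
    current: int = 0  # running score used by the smooth algorithm


class SmoothWeightedRoundRobin:
    """Pick upstreams in proportion to their weights, interleaved evenly."""

    def __init__(self, weights):
        if not weights or any(w <= 0 for w in weights.values()):
            raise ValueError("weights must be a non-empty mapping of positive integers")
        self._upstreams = [Upstream(name, w) for name, w in weights.items()]
        self._total = sum(weights.values())

    def pick(self) -> str:
        # Every upstream gains its weight, the highest score wins,
        # and the winner is pushed back by the total weight.
        for upstream in self._upstreams:
            upstream.current += upstream.weight
        chosen = max(self._upstreams, key=lambda u: u.current)
        chosen.current -= self._total
        return chosen.name


if __name__ == "__main__":
    balancer = SmoothWeightedRoundRobin({"provider-a": 3, "provider-b": 1})
    print([balancer.pick() for _ in range(4)])
```

With weights 3 and 1, three of every four requests go to `provider-a`. A latency-aware or cost-aware strategy would adjust the weights over time rather than change the selection loop.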

Precise Rate Limiting

Token & Request Rate Limiting

Fine-grained rate limiting by tokens, requests, or custom dimensions. Per-consumer, per-route, and cluster-wide policies to control costs and prevent abuse.
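Token-based limiting differs from request counting in that each call consumes a variable amount of budget. The sketch below shows one common way to model a tokens-per-minute limit, a per-consumer token bucket. It is a single-process illustration under that assumption: the class and parameter names are hypothetical, and a cluster-wide policy would need shared state that is not shown here.

```python
import time


class TokenBudgetLimiter:
    """Per-consumer token bucket that refills continuously up to a per-minute budget."""

    def __init__(self, tokens_per_minute: int, clock=time.monotonic):
        self._capacity = float(tokens_per_minute)
        self._refill_per_second = tokens_per_minute / 60.0
        self._clock = clock  # injectable so the limiter can be tested deterministically
        self._buckets = {}   # consumer -> (available_tokens, last_seen_timestamp)

    def allow(self, consumer: str, tokens: int) -> bool:
        now = self._clock()
        available, last = self._buckets.get(consumer, (self._capacity, now))
        # Refill for the elapsed time, never exceeding the per-minute capacity.
        available = min(self._capacity, available + (now - last) * self._refill_per_second)
        allowed = tokens <= available
        if allowed:
            available -= tokens
        self._buckets[consumer] = (available, now)
        return allowed


if __name__ == "__main__":
    limiter = TokenBudgetLimiter(tokens_per_minute=600)
    print(limiter.allow("team-a", 500))  # fits within the initial budget
    print(limiter.allow("team-a", 200))  # exceeds what is left right now
```

A requests-per-minute limit is the same structure with every call costing one unit.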

Security Guardrails

Enterprise-Grade Security

Protect your AI pipeline with prompt injection detection, content moderation, PII redaction, and comprehensive audit logging for regulatory compliance.
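As a simplified picture of PII redaction, the sketch below masks email addresses and US Social Security numbers in a prompt before it would be forwarded upstream. The patterns and placeholder labels are assumptions made for this example; real guardrails typically combine many more detectors and are not limited to regular expressions.

```python
import re

# Illustrative patterns only; they are deliberately simple and will miss edge cases.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
US_SSN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def redact_pii(text: str) -> str:
    """Replace matched PII with fixed placeholders so the model never sees it."""
    text = EMAIL.sub("[EMAIL]", text)
    return US_SSN.sub("[SSN]", text)


if __name__ == "__main__":
    print(redact_pii("Mail jane.doe@example.com, SSN 123-45-6789."))
```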

Rich Observability

Full-Stack Observability

Track every token, monitor latency distributions, and analyze traffic patterns in real-time. Native integration with Prometheus, Grafana, and ClickHouse.

Production-Grade, Cloud-Native Architecture

Control Plane and Data Plane separation ensures high scalability, zero-downtime upgrades, and enterprise-level reliability.

Stateless Data Plane

Horizontally scalable with zero state — add or remove nodes instantly without data migration.

etcd-Based Config

Centralized configuration management with real-time propagation and hot-reload capabilities.

Separation of Concerns

Control Plane handles management; Data Plane handles traffic. Independent scaling and upgrades.

Deploy in Minutes, Not Weeks

OpenAI-compatible API. Production-ready from day one.

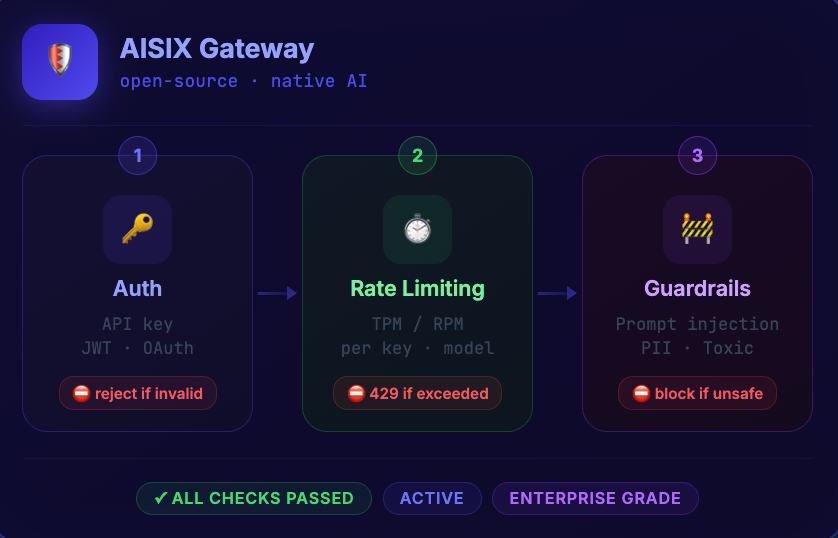

Enterprise-grade security built into every request

- TPM / RPM rate limiting — cap tokens-per-minute and requests-per-minute per key, per model

- Guardrails — block prompt injection, PII leakage, and toxic content before it reaches the model

- Per-key access control — restrict which models each API key can call

- Concurrency limits — prevent single consumers from monopolising capacity
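The per-key access control and concurrency limits listed above can be pictured as two checks made before a request is admitted. The following is a minimal in-memory sketch under that assumption; `KeyPolicy` and its methods are hypothetical names, and the code is not thread-safe.

```python
from collections import defaultdict


class KeyPolicy:
    """Admit a request only if the key may call the model and has spare concurrency."""

    def __init__(self, allowed_models, max_in_flight: int):
        self._allowed = allowed_models          # api_key -> set of permitted model names
        self._max_in_flight = max_in_flight     # concurrent requests allowed per key
        self._in_flight = defaultdict(int)      # api_key -> requests currently running

    def try_acquire(self, api_key: str, model: str) -> bool:
        if model not in self._allowed.get(api_key, set()):
            return False  # key is unknown or not permitted to call this model
        if self._in_flight[api_key] >= self._max_in_flight:
            return False  # key already uses its full concurrency allowance
        self._in_flight[api_key] += 1
        return True

    def release(self, api_key: str) -> None:
        # Call once the upstream response has completed or failed.
        if self._in_flight[api_key] > 0:
            self._in_flight[api_key] -= 1


if __name__ == "__main__":
    policy = KeyPolicy({"key-a": {"model-x"}}, max_in_flight=1)
    print(policy.try_acquire("key-a", "model-x"))
    print(policy.try_acquire("key-a", "model-y"))
```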

100+ LLM Providers, One Gateway

Connect to any major LLM provider through a unified, OpenAI-compatible interface. No vendor lock-in, ever.

The Best Pricing Plans

Start free with our open-source core. Scale to enterprise when you're ready.

Open Source

Everything you need to get started with AISIX

- Connect 100+ LLM providers

- Load balancing + RPM/TPM limits

- Virtual key management

- OpenTelemetry, Prometheus, Jaeger

- Model guardrails

Enterprise

Cloud or Self-Hosted. For teams managing AI traffic at scale.

- Includes all open-source features

- Priority support + custom SLAs

- Budgets, teams, and RBAC

- Audit logs and compliance

- Complete enterprise features

Frequently Asked Questions

Common questions from platform engineers evaluating AI gateways.

What is an AI gateway?

An AI gateway is a reverse proxy that sits between your applications and LLM providers (OpenAI, Anthropic, Google Gemini, DeepSeek, etc.). It centralizes authentication, load balancing, rate limiting, observability, and security policies for all AI and LLM traffic, much as a traditional API gateway does for REST API traffic.

Learn More About AI Gateways

Explore documentation, comparisons, and guides to evaluate AISIX for your AI infrastructure.

AISIX Documentation

Quickstart guides, API references, deployment tutorials, and plugin documentation for the AISIX AI gateway.

View on GitHub

Explore the AISIX source code, file issues, and contribute to the open-source AI gateway project.

AI Gateway Guide (White Paper)

A comprehensive guide to AI gateways: architecture patterns, LLM traffic governance, and production deployment strategies.

AI Gateway vs MCP Gateway vs API Gateway

Understand the differences between AI gateways, MCP gateways, and traditional API gateways — and when to use each.

What Is an AI Gateway?

Deep dive into AI gateway architecture, use cases, and how it fits into your LLM infrastructure stack.

API Gateway Comparison

Compare AISIX against Kong, NGINX, Traefik, Tyk, and other API gateways across features, performance, and pricing.